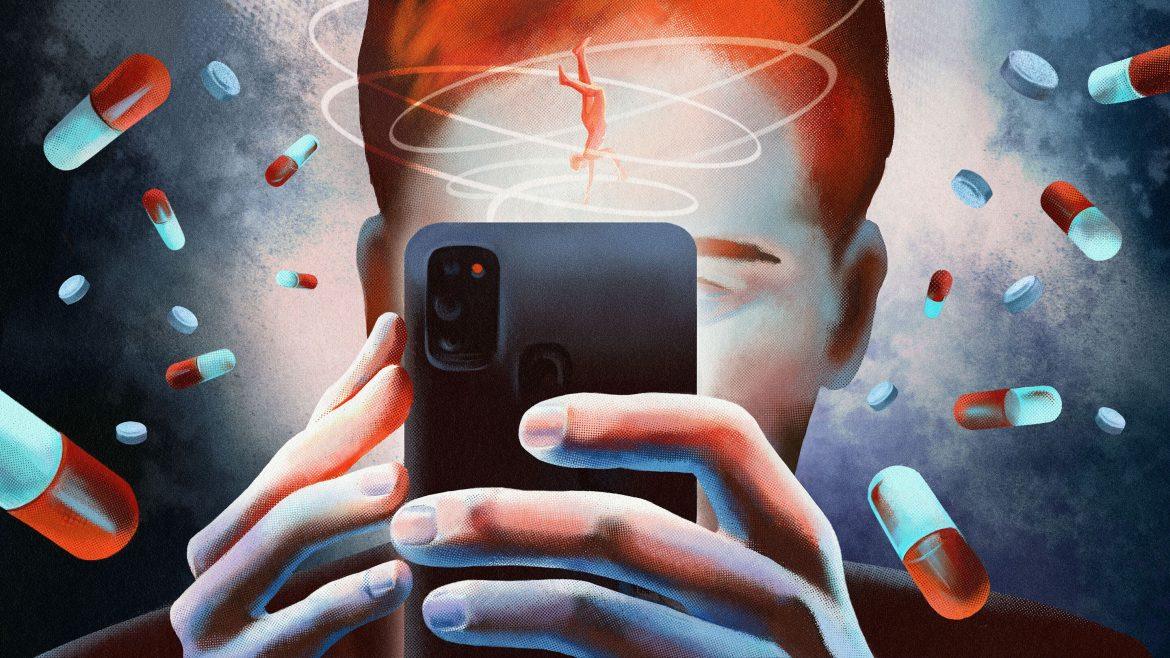

By most measures, the new GLP-1 pills are underwhelming. Earlier this month, the pharmaceutical giant Eli Lilly debuted a weight-loss tablet that is far less effective than its popular injectable counterpart, Zepbound. Oral Wegovy, which hit the market in December, can hold its own with the shot version, but it has to be taken on an empty stomach with less than four ounces of water. And both pills come with many of the same side effects as the shots, namely nausea and diarrhea.

As someone who’s currently on a GLP-1 injection, I still can’t wait to start taking the pills instead. No, I’m not afraid of needles. The injector pens that are used to administer GLP-1s are so quick and painless that sometimes I worry the needle didn’t actually go into my skin. But the shots are a nuisance nonetheless. They’re supposed to be taken on the same day every week, but I struggle to stick to the schedule. The injections also need to be refrigerated—which is especially a hassle whenever I travel. Last month, I visited relatives who don’t know that I’m on a weight-loss drug. I was too sheepish to have a conversation with them about it, so I left the pen in my toiletry bag and didn’t throw it in the trash until I got home.

This might all seem trivial, but a mere annoyance compounds when it must be repeated every week for the rest of your life. Even though many GLP-1 users hit a weight-loss plateau after several months, they have to continue injecting themselves to avoid gaining the weight back. “Over time—particularly as patients transition from the active-weight-loss phase to long-term maintenance—the psychological burden of ongoing injections can become more apparent,” Akshay Jain, a clinical instructor at the University of British Columbia, told me. (Jain has consulted for both Eli Lilly and Novo Nordisk.)

This may be where the true value in weight-loss pills lies. Patients may be able to use the injections to actually lose weight, and then lean on the tablets to keep it off. The pills can be downed with a swig of water just like statins, SSRIs, and so many other pharmaceuticals that are already part of people’s daily routine. On that front, Eli Lilly’s pill, sold under the brand name Foundayo, is especially notable. For most people, the drug doesn’t come with fussy rules about when it should be taken. Doctors I spoke with were enthusiastic about the idea of switching some patients to pills because it allows them to feel as if they’ve made meaningful progress toward normalcy.

So far, doctors just haven’t had very good options for GLP-1 patients who are looking to maintain their weight loss. Some have attempted to switch patients to older weight-loss pills. Others have suggested that patients space out injections to every few weeks. But neither strategy is foolproof. The older drugs have been successfully tested as a way to maintain weight after bariatric surgery, but they haven’t been studied among people coming off GLP-1s, David Cummings, a professor at the University of Washington, told me. Anecdotal evidence suggests that switching to the older pills may not be all that effective. “I have a lot of patients that regain all of their weight,” Catherine Varney, the director of obesity medicine at UVA Health, told me. (Varney has done paid speaking gigs for Eli Lilly.) The tactic of taking a GLP-1 shot less frequently, meanwhile, hasn’t been tested in a large randomized clinical trial.

By contrast, a recent clinical trial by Eli Lilly tracked nearly 400 subjects who switched from shots to Foundayo, and found that on average, they maintained most of their weight loss after 52 weeks. (The study has not yet been published in a peer-reviewed journal.) A comparable study hasn’t been done with oral Wegovy, but Novo Nordisk, the company that manufactures the drug, believes that patients should be able to switch from the Wegovy shot without affecting their weight. Because the two drugs are made with the same molecule, “there’s no reason to think that there’d be any difference,” Andrea Traina, the senior medical director for obesity at Novo Nordisk, told me. Doctors told me that they have been encouraged by patients who have already switched from shots to oral Wegovy. “Initial anecdotal reports are promising, but we still need more long-term data to form conclusions,” Katie Robinson, an assistant professor at the University of Iowa, told me.

All of this comes with a significant caveat. These pills may not remedy the biggest reason people stop taking GLP-1s: price. The new tablets are cheaper than the shots, but they’re still not cheap. If you pay out of pocket, the lowest dose of Foundayo costs $149 a month, which is about half the price of the cheapest version of Zepbound. The Wegovy pill also starts at $149, compared with $199 for the injection. Again, this is $149 every month indefinitely—it quickly adds up. With insurance, the cost of these drugs can be much lower, but few people can count on that. A recent survey of employers found that only about one in five insurance plans at large companies covered GLP-1s for weight loss. The situation gets especially tricky for older Americans. Medicare is technically banned by law from covering weight-loss drugs, though the Trump administration is piloting a program to provide beneficiaries with access.

There is hope that the price of Foundayo, in particular, will drop over time because of the way it is manufactured. Unlike all of the other GLP-1s on the market, it is not a peptide—a class of drugs that mimics hormones in the body—which makes it much simpler to produce. But even if the price plummets, not everyone may be as eager to switch to a pill as I am. Everyone who takes a GLP-1 has their own issues that affect whether they stick with their regimen. For some, the once-weekly injection may be more convenient than daily pills; others may want drugs that come with fewer side effects; and still others may just decide to take whatever is cheapest and does the job.

So much of the attention surrounding GLP-1s has been on their remarkable efficacy at shedding weight—and the search for drugs that are even better. Retatrutide, a much-hyped injection that is in the works, can apparently lead to nearly 30 percent loss in body weight on average. But options that lessen the burden of taking a forever drug may matter even more.